I plan on making this a two-part blog series covering the following topics:

- Why use Jupyter for threat hunting, and setting up Jupyter with msticpy and MDE

- An example host investigation notebook with example msticpy custom queries

The Why

When it comes to threat hunting, how many times have you seen an analyst with a giant OneNote or Confluence page full of queries that everyone runs periodically (or even ad hoc) and then analyses the output of, only to do the same thing again next time with minimal tuning or exclusion of legitimate activity?

It’s not uncommon to see little documentation or sharing on how to analyse the collected data to detect potential intrusions in a network, especially when the team is very diverse in technical background and expertise. This gets even more complex in the MSP space, where analysts rely on different tools to build threat hunting queries because different clients use different EDRs and SIEMs.

It gets more confusing still when there are no concrete examples of how to determine what is malicious in the output of a query, meaning that inexperienced analysts may miss malicious activity because they don't understand how to analyse the output.

This traditional approach to threat hunting does not scale in the MSP space, where you may need to manually log in to multiple EDR consoles. That takes time, and that's before you account for the time to run the queries, collect the data and work out what's normal in each environment. It also leads to analysts working in silos rather than collaborating on hypothesis and query development.

I’ve found Jupyter notebooks a good way to fix these issues and many more, especially within the MSP space. For me, Jupyter notebooks address the following issues.

Data Persistence and Replicability

Using Jupyter makes it possible to see exactly which queries and analysis have been run. It also makes it easy for less experienced analysts to follow along with an entire threat hunt and level up their skills.
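As a minimal sketch of what that persistence might look like, assuming your query results arrive as a pandas DataFrame, the hypothetical `save_hunt_results` helper below writes the results to CSV next to a small JSON file recording the query text, row count and save time, so anyone picking up the hunt later can see exactly what was run. The function name and file layout are illustrative choices, not part of msticpy.

```python
import json
from datetime import datetime, timezone
from pathlib import Path

import pandas as pd


def save_hunt_results(df: pd.DataFrame, query: str, out_dir: str, name: str) -> Path:
    """Hypothetical helper: save query results as CSV plus a JSON sidecar with the query."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)

    # The raw results, so the analysis can be re-run without re-querying the EDR
    csv_path = out / f"{name}.csv"
    df.to_csv(csv_path, index=False)

    # Record what produced the results and when
    metadata = {
        "query": query,
        "rows": len(df),
        "saved_at_utc": datetime.now(timezone.utc).isoformat(),
    }
    (out / f"{name}.json").write_text(json.dumps(metadata, indent=2))
    return csv_path
```

Pointing `out_dir` at a shared location is one way to keep hunt data stored centrally for the whole team.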

Data Normalisation and Scalability

For MSPs in particular, Jupyter allows you to utilise EDR vendors' APIs to run queries and collect data from multiple EDR vendors, e.g. Cybereason, MDE, etc.

You can then normalise the field names and use the normalised data to run the same notebook against different EDR vendors. This means analysts can build queries for suspicious activity with pandas in a Jupyter notebook and apply them to multiple EDR vendors at once, leaving more time to develop more advanced queries and build baselines of known good activity. The same logic applies to internal teams that have a SIEM and an EDR.
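The sketch below shows one way to do this normalisation with pandas, assuming each vendor's results arrive as a DataFrame. The MDE column names are real `DeviceProcessEvents` fields, but the `other_edr` field names, the shared lowercase schema and the `FIELD_MAPS`, `normalise` and `combine` names are hypothetical placeholders you would replace with what your other vendors actually return.

```python
import pandas as pd

# Hypothetical mappings from each vendor's native field names to one shared schema.
# MDE names are real DeviceProcessEvents columns; "other_edr" names are placeholders.
FIELD_MAPS = {
    "mde": {
        "Timestamp": "timestamp",
        "DeviceName": "host",
        "AccountName": "user",
        "FileName": "process_name",
        "ProcessCommandLine": "command_line",
    },
    "other_edr": {
        "eventTime": "timestamp",
        "machineName": "host",
        "userName": "user",
        "processName": "process_name",
        "commandLine": "command_line",
    },
}


def normalise(df: pd.DataFrame, vendor: str) -> pd.DataFrame:
    """Rename a vendor's columns to the shared schema and keep only those columns."""
    mapping = FIELD_MAPS[vendor]
    out = df.rename(columns=mapping)[list(mapping.values())].copy()
    out["vendor"] = vendor  # keep track of where each row came from
    return out


def combine(frames: dict[str, pd.DataFrame]) -> pd.DataFrame:
    """Normalise every vendor's DataFrame and stack them into one."""
    return pd.concat(
        [normalise(df, vendor) for vendor, df in frames.items()], ignore_index=True
    )
```

Once combined, a single pandas filter such as `combined[combined["process_name"].str.lower() == "nltest.exe"]` runs against every vendor's data at once.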

More Advanced Queries in a Shorter Timeframe

Instead of analysing the output directly within the EDR console in the browser, which doesn't always work out (think browser crashes), analysts can build their query and have the data directly available to them in a pandas DataFrame. Depending on your setup, this data can also be collected automatically and stored centrally, so analysts don't have to wait for the data collection stage of the hunt and can go straight to the analysis, giving them more time to develop advanced analysis. A lot of this analysis can be turned into functions, which lowers the learning curve for more junior analysts and makes the code reusable across multiple hunts where there is MITRE ATT&CK TTP crossover.
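As an illustration of turning a common analysis step into a reusable function, the sketch below "stacks" a column and returns values seen on only a handful of hosts, a least-frequency-of-occurrence approach that applies to many hunts. It assumes MDE-style column names (`DeviceName`, `FileName`); the function name `rare_values` and the default threshold of one host are hypothetical choices to tune for your environment.

```python
import pandas as pd


def rare_values(
    df: pd.DataFrame, column: str, max_hosts: int = 1, host_col: str = "DeviceName"
) -> pd.DataFrame:
    """Return values of `column` seen on at most `max_hosts` distinct hosts."""
    # Count how many distinct hosts each value appears on
    counts = (
        df.groupby(column)[host_col]
        .nunique()
        .rename("host_count")
        .reset_index()
    )
    # Rarest first: values on very few hosts are often the interesting ones
    return counts[counts["host_count"] <= max_hosts].sort_values(["host_count", column])
```

For example, `rare_values(process_df, "FileName")` lists binaries executed on a single host, and the same call works on other columns such as `ProcessCommandLine` or `InitiatingProcessFileName`.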

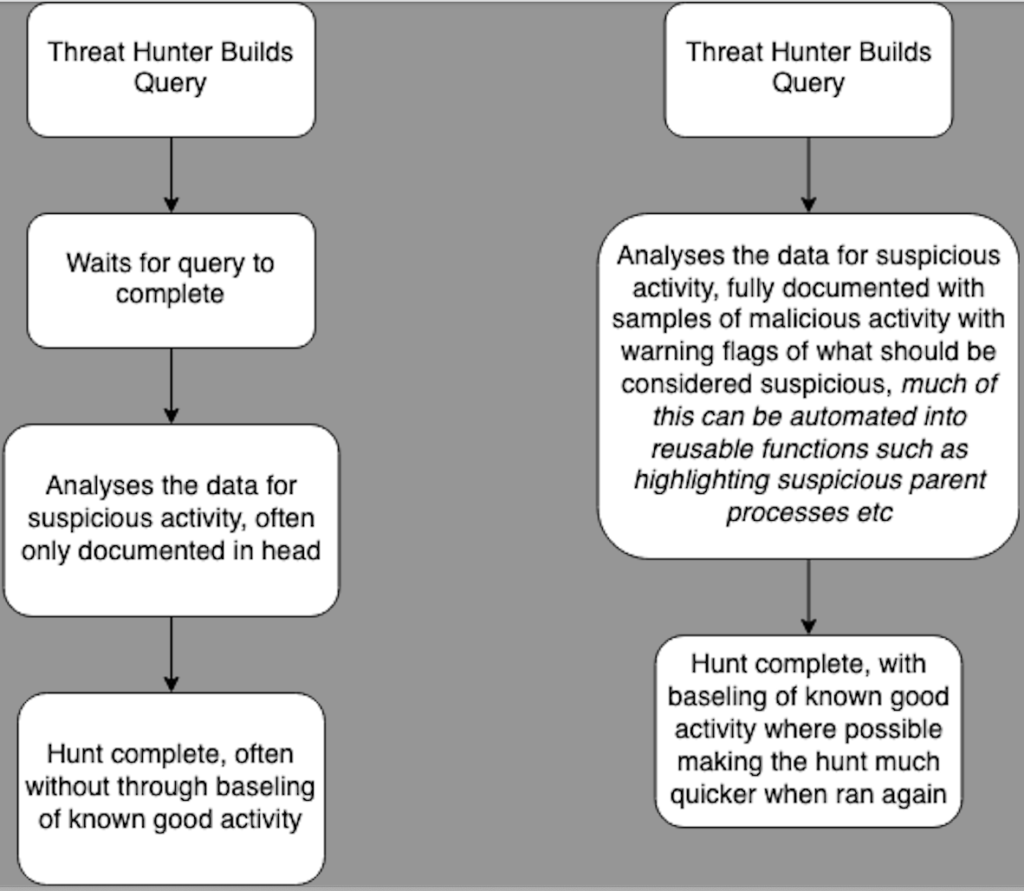

You can see the old way on the left compared with the notebook way on the right.

External Data Joining

Since you already have the data in a notebook, it's very easy to query services such as whois at scale. For example, if a login event only gives you an IP address and no GeoIP data, you can easily enrich it with the GeoIP data in a new column, so analysts no longer have to look it up manually. This can be expanded to pretty much any idea you have.

You can also query tools such as ServiceNow via their APIs to automate the collection of users' job roles and titles, which you can use to enhance investigations, e.g. spotting an HR user running net to query the Domain Admins group.
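A minimal sketch of that enrichment, assuming a DataFrame with an IP column: each distinct IP is looked up once and the results are joined back onto every row, which keeps API calls down when the same IP appears many times. The `lookup` callable is a hypothetical stand-in for whatever whois or GeoIP service you use; it just needs to take an IP string and return a dict of fields.

```python
from typing import Callable

import pandas as pd


def enrich_ips(
    df: pd.DataFrame, lookup: Callable[[str], dict], ip_col: str = "RemoteIP"
) -> pd.DataFrame:
    """Add the fields returned by `lookup` as new columns, one lookup per distinct IP."""
    unique_ips = df[ip_col].dropna().unique()
    if len(unique_ips) == 0:
        return df.copy()
    # Look each IP up once, even if it appears on thousands of rows
    enrichment = pd.DataFrame([{ip_col: ip, **lookup(ip)} for ip in unique_ips])
    # Left join so rows are kept even if the lookup returned nothing useful
    return df.merge(enrichment, on=ip_col, how="left")
```

In the notebook you would call something like `enrich_ips(logins, my_geoip_lookup)`, where `my_geoip_lookup` is your own wrapper around the service of your choice.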
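A sketch of the HR example, assuming you have already pulled users' job titles (e.g. from a ServiceNow export) into a DataFrame with hypothetical `AccountName` and `JobTitle` columns, and that process events use MDE's `AccountName`, `FileName` and `ProcessCommandLine` columns. Joining on `AccountName` assumes both sources use the same account format, and `EXPECTED_ROLES` is a placeholder for the roles that legitimately enumerate domain groups in your environment.

```python
import pandas as pd

# Hypothetical roles that would normally query privileged domain groups
EXPECTED_ROLES = {"IT Support", "Systems Administrator", "Security Analyst"}


def flag_unexpected_admin_enum(procs: pd.DataFrame, roles: pd.DataFrame) -> pd.DataFrame:
    """Flag net/net1 queries of Domain Admins by users outside EXPECTED_ROLES."""
    names = procs["FileName"].str.lower()
    cmd = procs["ProcessCommandLine"].str.lower()
    enum = procs[
        names.isin(["net.exe", "net1.exe"])
        & cmd.str.contains("domain admins", regex=False, na=False)
    ]
    # Attach each user's job title; users missing from the role data get NaN
    enriched = enum.merge(roles, on="AccountName", how="left")
    # Unknown roles are also flagged, since they are worth a look too
    return enriched[~enriched["JobTitle"].isin(EXPECTED_ROLES)]
```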

Sophisticated Data Processing, Machine Learning and Visualisation

Using Jupyter notebooks gives you access to many advanced Python data science libraries, which greatly reduce the learning curve for applying data science techniques.

For example, instead of detecting port scanning by looking for a device connecting to an arbitrary number of hosts (e.g. 50 hosts on port 445 in an hour), we could calculate each host's mean and MAD and then look for a host connecting to double the number of IPs on port 445 that it normally would.
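Here is a minimal pandas sketch of that idea, assuming MDE `DeviceNetworkEvents`-style columns (`Timestamp`, `DeviceName`, `RemoteIP`, `RemotePort`) with `Timestamp` already parsed as datetimes. It counts distinct destination IPs per host per hour, treats MAD as the mean absolute deviation, and flags hours that are more than double the host's mean and more than `k` MADs above it. The function name and `k=3` are assumptions to tune; swapping in the median and median absolute deviation would make the baseline more robust to the outliers you are hunting for.

```python
import pandas as pd


def flag_smb_fanout(events: pd.DataFrame, port: int = 445, k: float = 3.0) -> pd.DataFrame:
    """Flag host-hours whose distinct destination IPs on `port` are more than
    double the host's mean and more than k mean absolute deviations above it."""
    smb = events[events["RemotePort"] == port].copy()
    smb["Hour"] = smb["Timestamp"].dt.floor("60min")

    # Distinct destinations per host per hour (hours with no traffic on the port are not counted)
    hourly = (
        smb.groupby(["DeviceName", "Hour"])["RemoteIP"]
        .nunique()
        .rename("DistinctIPs")
        .reset_index()
    )

    # Per-host baseline: mean and mean absolute deviation of the hourly counts
    per_host = hourly.groupby("DeviceName")["DistinctIPs"]
    hourly["Mean"] = per_host.transform("mean")
    hourly["MAD"] = per_host.transform(lambda s: (s - s.mean()).abs().mean())

    unusual = (hourly["DistinctIPs"] > 2 * hourly["Mean"]) & (
        hourly["DistinctIPs"] > hourly["Mean"] + k * hourly["MAD"]
    )
    return hourly[unusual]
```

Because the threshold is relative to each host's own history, a file server that always talks to many hosts on 445 is not flagged, while a workstation that suddenly fans out is.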

Document, Standardise and Share Detection Notebooks

Since one Jupyter notebook can be vendor agnostic and work with multiple EDR/SIEM vendors, we can standardise the data analysis to make sure everyone is hunting for the same activity. It is also easy to manage notebooks with git, allowing teams to work on and share notebooks with ease.

Being able to investigate the “gray areas”

EDR vendors always have to balance detecting malicious activity against sending lots of false positives for analysts to investigate. As a result, they tend to trigger an alert only when something is likely to be malicious, which means an attacker who follows good OPSEC may remain undetected in your environment. Because you can collect raw EDR telemetry, you can identify activity that is suspicious in your environment but does not necessarily trigger an EDR alert, and this fine-grained tuning means you may detect, at scale, things that would otherwise go unalerted. A good example is “nltest” and “net” process executions from the same user/host in a short space of time, or PsExec usage.
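A sketch of the nltest/net example, assuming MDE `DeviceProcessEvents`-style columns (`Timestamp`, `DeviceName`, `AccountName`, `FileName`) with parsed timestamps. It pairs every `nltest.exe` execution with every `net.exe`/`net1.exe` execution by the same account on the same host and keeps pairs within a time window. The 10-minute default and the function name are assumptions, and the pairwise merge is fine for a hunt-sized dataset but would need rethinking for very large ones.

```python
import pandas as pd


def find_nltest_net_pairs(
    events: pd.DataFrame, window: pd.Timedelta = pd.Timedelta(minutes=10)
) -> pd.DataFrame:
    """Return nltest/net execution pairs by the same account on the same host within `window`."""
    names = events["FileName"].str.lower()
    nltest = events[names == "nltest.exe"]
    net = events[names.isin(["net.exe", "net1.exe"])]

    # Pair every nltest run with every net run by the same user on the same host
    pairs = nltest.merge(net, on=["DeviceName", "AccountName"], suffixes=("_nltest", "_net"))

    # Keep pairs that happened close together, in either order
    gap = (pairs["Timestamp_net"] - pairs["Timestamp_nltest"]).abs()
    return pairs[gap <= window]
```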

The How

I will not go into too much depth on how to actually install Jupyter since there are many ways to do it, ranging from setting up your own Jupyter server to using Azure Machine Learning. For testing, you could even run it locally with Visual Studio Code and the Jupyter and Python extensions.

Now that we know why we should utilise Jupyter notebooks, let's look at the how. I like the msticpy project since it makes querying EDRs painless and has lots of additional built-in helpful features, such as IOC formatting and many more.

If you need to set up and obtain MDE API credentials, you can follow Microsoft's guide here.

So let's start by installing msticpy. I’ll assume you already have a Python environment set up and are using virtualenv or conda to manage it. If not, it is pretty simple to set up a basic conda environment by running the following two commands. You can get Miniconda from here.

conda create ThreatHunting python=3.10

conda activate ThreatHuntingthen Installing msticpy is as simple as

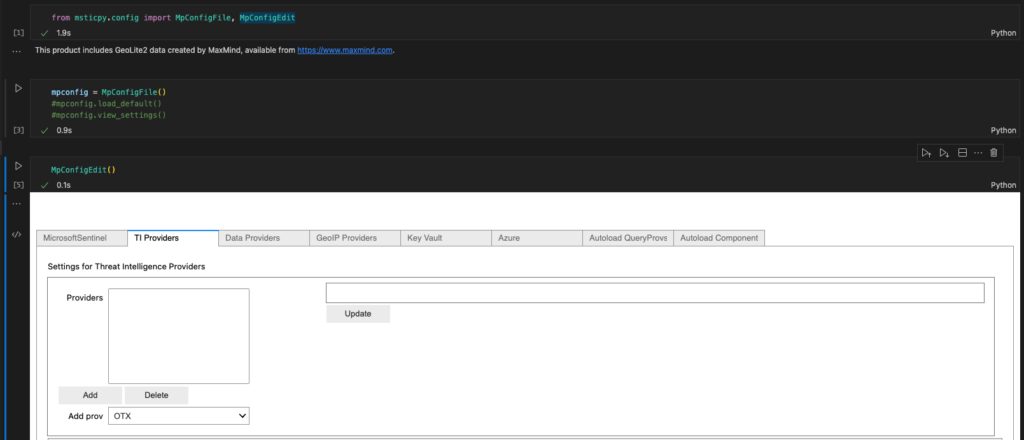

pip install "msticpy[azsentinel,azure]"Now we have msticpy installed we should create our msticpy.yaml file, this is the file where we will store credentials, msticpy has a built in msticypy editor that you can use by copying the code below. I’ve attached it in a basic notebook available here.

A basic msticpyconfig.yaml file that just connects to MDE, without any CTI providers or custom queries, will look something like this. Note that for production you will likely want to use Azure Key Vault to store your credentials, which can easily be configured with MpConfigEdit().

```yaml
msticpy:
  FriendlyExceptions: True
DataProviders:
  MicrosoftDefender:
    Args:
      ClientId: "i_am_a_client_id"
      ClientSecret: "i_am_a_client_secret"
      TenantId: "i_am_a_tenant_id"
      UserName: "youremail@domain.com"
      Cloud: "global"
      IntegratedAuth: True
```

Now that we have a working msticpyconfig.yaml file, it's time to create an environment variable called MSTICPYCONFIG. To do this in conda, run the following:

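```bash
# Skip the first 2 lines if you have already set up the conda env
conda create -n ThreatHunting python=3.10
conda activate ThreatHunting

# conda runs these scripts whenever the environment is activated/deactivated
cd $CONDA_PREFIX
mkdir -p ./etc/conda/activate.d
mkdir -p ./etc/conda/deactivate.d
touch ./etc/conda/activate.d/env_vars.sh
touch ./etc/conda/deactivate.d/env_vars.sh

# Set the variable on activation and remove it again on deactivation
echo "export MSTICPYCONFIG=/path/to/msticpyconfig.yaml" >> ./etc/conda/activate.d/env_vars.sh
echo "unset MSTICPYCONFIG" >> ./etc/conda/deactivate.d/env_vars.sh

# Re-activate so the variable is picked up
conda deactivate
conda activate ThreatHunting
```

Alternatively, you can create a .msticpy folder in your home directory and place your msticpyconfig.yaml file in it.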
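Because a Jupyter kernel only sees environment variables that were set when it started, it can be worth confirming the variable is visible before connecting. Below is a minimal sketch using only the standard library; the helper name `check_msticpy_config` is hypothetical, and it only checks the environment-variable route, not the home-directory folder.

```python
import os
from pathlib import Path


def check_msticpy_config(env_var: str = "MSTICPYCONFIG") -> Path:
    """Confirm the config environment variable is set and points at an existing file."""
    value = os.environ.get(env_var)
    if not value:
        # Usually means the kernel was started before the env was (re-)activated
        raise RuntimeError(
            f"{env_var} is not set; re-activate the conda env and restart the kernel"
        )
    path = Path(value).expanduser()
    if not path.is_file():
        raise FileNotFoundError(f"{env_var} points at {path}, which does not exist")
    return path
```

Running `check_msticpy_config()` in the first cell of a notebook gives a clear error early, rather than a less obvious failure when connecting.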

Putting It All Together

You should now have the following:

- a msticpyconfig.yaml file set up

- msticpy installed and the MSTICPYCONFIG environment variable set up

- some way of running Jupyter notebooks

Now let's build a Jupyter notebook to connect to MDE and run a sample query.

This cell will import pandas and the msticpy query provider:

import pandas as pd

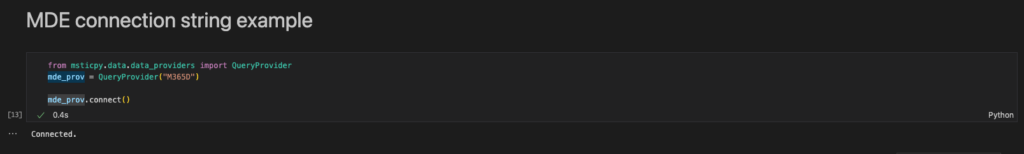

from msticpy.data.data_providers import QueryProviderThis cell will connect to Microsoft Defender for Endpoint using the credentials you stored in your msticpyconfig.yaml file, providing you set it correctly as an environment variable.

```python
mde_prov = QueryProvider("M365D")
mde_prov.connect()
```

You should now see a "connected" message, meaning that everything went well.

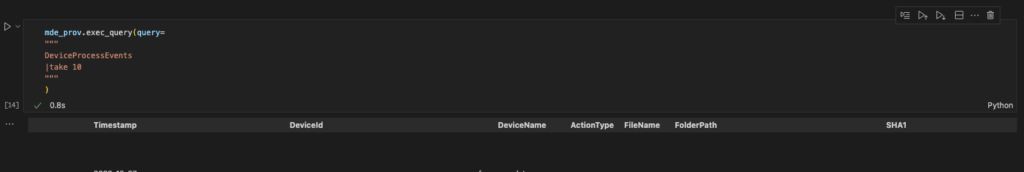

Now for the moment of truth: let's execute a basic query to get some results back.

```python
# exec_query returns the query results as a pandas DataFrame
mde_prov.exec_query(query="""
DeviceProcessEvents
| take 10
""")
```

That's everything! Part 2 will go over creating custom hunting queries for MDE that you can run to get results directly within msticpy, along with a sample endpoint investigation notebook.

I’ve put the Jupyter notebook here if you want to save the effort of copying and pasting.